Setting Up a Hadoop Development Environment in Eclipse

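I. Overview

1. The Hadoop cluster used in this walkthrough runs in pseudo-distributed mode, and the basic Eclipse configuration is already complete.

2. Software versions: hadoop-2.7.3.tar.gz and apache-maven-3.5.0.rar.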

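II. Developing Against the Hadoop Cluster from Eclipse

1. Configure Hadoop on the development host

① Extract hadoop-2.7.3.tar.gz on the local host

② Replace the bin folder in the extracted directory with the bin folder from a Windows build of Hadoop

③ Configure the Hadoop environment variables on Windows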
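The three steps above can be sanity-checked with a small program. The following is only a sketch under stated assumptions: it assumes the environment variable is named `HADOOP_HOME`, and that the Windows bin folder is expected to contain `winutils.exe` and `hadoop.dll`. Neither name is given in this article, so adjust both to match your own setup. The class name `HadoopHomeCheck` is hypothetical.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Sketch: check that a local Hadoop directory has the Windows helper files.
// The expected file names are an assumption, not taken from the article.
class HadoopHomeCheck {

    // Returns the expected files that are missing under <hadoopHome>/bin.
    static List<String> missingFiles(Path hadoopHome) {
        String[] expected = {"winutils.exe", "hadoop.dll"};
        List<String> missing = new ArrayList<>();
        for (String name : expected) {
            if (!Files.isRegularFile(hadoopHome.resolve("bin").resolve(name))) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        // HADOOP_HOME is assumed to be the variable set in step ③.
        String home = System.getenv("HADOOP_HOME");
        if (home == null || home.isEmpty()) {
            System.err.println("HADOOP_HOME is not set");
            return;
        }
        List<String> missing = missingFiles(Paths.get(home));
        System.out.println(missing.isEmpty()
                ? "bin folder looks complete: " + home
                : "Missing from bin: " + missing);
    }
}
```

Run it from a new terminal or after restarting Eclipse, since a process started before the variable was set will not see it.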

2. Configure the Hadoop cluster information in Eclipse

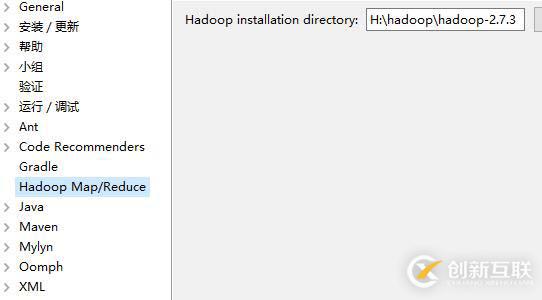

① Add the Hadoop path in Eclipse

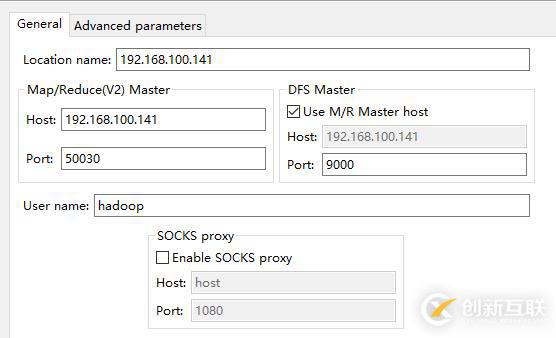

② Configure the Hadoop cluster access information
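If Eclipse cannot connect after this step, it may help to confirm first that the cluster's host and port are reachable from the development host. The sketch below uses only the JDK and makes no Hadoop calls. The class name `ClusterPortCheck` is hypothetical, and the host and port are passed as arguments because the correct values depend on your own cluster configuration.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Sketch: test plain TCP reachability of a cluster service.
class ClusterPortCheck {

    // Returns true if a TCP connection to host:port succeeds within the timeout.
    static boolean canConnect(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Covers refused connections, timeouts, and unknown hosts.
            return false;
        }
    }

    public static void main(String[] args) {
        if (args.length != 2) {
            System.err.println("Usage: ClusterPortCheck <host> <port>");
            System.exit(2);
        }
        String host = args[0];
        int port = Integer.parseInt(args[1]);
        System.out.println(host + ":" + port
                + (canConnect(host, port, 3000) ? " is reachable" : " is NOT reachable"));
    }
}
```

A successful connection only shows that something is listening on that port. It does not prove that the values entered in Eclipse are the right ones.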

3. Disable permission checking on the Hadoop cluster

Add the following property to hdfs-site.xml:

    <property>
        <name>dfs.permissions</name>
        <value>false</value>
    </property>
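To confirm that the edited file really carries the intended value, the property can be read back with the JDK's XML parser. This is a minimal sketch rather than Hadoop's own configuration loader. The class name `SiteXmlReader` is hypothetical, and the embedded XML assumes the usual `<configuration>` root element around the property shown above.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Sketch: look up one property in Hadoop-style site XML using only the JDK.
class SiteXmlReader {

    // Returns the <value> of the named property, or null if it is absent.
    static String readProperty(String xml, String name) {
        try {
            Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
            NodeList props = doc.getElementsByTagName("property");
            for (int i = 0; i < props.getLength(); i++) {
                Element prop = (Element) props.item(i);
                if (name.equals(textOf(prop, "name"))) {
                    return textOf(prop, "value");
                }
            }
            return null;
        } catch (Exception e) {
            throw new IllegalArgumentException("Could not parse XML", e);
        }
    }

    // Returns the trimmed text of the first child element with this tag, or null.
    private static String textOf(Element parent, String tag) {
        NodeList nodes = parent.getElementsByTagName(tag);
        return nodes.getLength() == 0 ? null : nodes.item(0).getTextContent().trim();
    }

    public static void main(String[] args) {
        String xml = "<configuration>"
                + "<property><name>dfs.permissions</name><value>false</value></property>"
                + "</configuration>";
        System.out.println(readProperty(xml, "dfs.permissions")); // prints "false"
    }
}
```

To check a real file, read its contents into a string and pass that to `readProperty` in place of the embedded sample.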

4. Create a file to test the connection and permissions

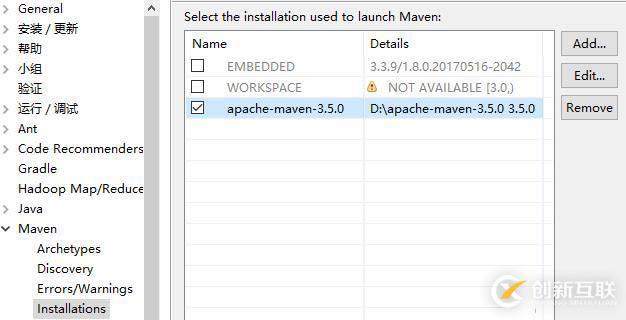

5. Install Maven

① Extract Maven on the development host

② Add the Maven path in Eclipse

6. Create a new Maven project

7. Edit the Maven configuration file (maven/pom.xml)

    <dependencies>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>2.7.3</version>
        </dependency>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>3.8.1</version>
            <scope>test</scope>
        </dependency>
    </dependencies>

8. Create a class for testing (WordCount)

import java.io.IOException;

import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.GenericOptionsParser;

public class WordCount {

public static class TokenizerMapper

extends Mapper<Object, Text, Text, IntWritable>{

private final static IntWritable one = new IntWritable(1);

private Text word = new Text();

public void map(Object key, Text value, Context context

) throws IOException, InterruptedException {

StringTokenizer itr = new StringTokenizer(value.toString());

while (itr.hasMoreTokens()) {

word.set(itr.nextToken());

context.write(word, one);

}

}

}

public static class IntSumReducer

extends Reducer<Text,IntWritable,Text,IntWritable> {

private IntWritable result = new IntWritable();

public void reduce(Text key, Iterable<IntWritable> values,

Context context

) throws IOException, InterruptedException {

int sum = 0;

for (IntWritable val : values) {

sum += val.get();

}

result.set(sum);

context.write(key, result);

}

}

public static void main(String[] args) throws Exception {

Configuration conf = new Configuration();

String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();

if (otherArgs.length < 2) {

System.err.println("Usage: wordcount <in> [<in>...] <out>");

System.exit(2);

}

Job job = Job.getInstance(conf, "word count");

job.setJarByClass(WordCount.class);

job.setMapperClass(TokenizerMapper.class);

job.setCombinerClass(IntSumReducer.class);

job.setReducerClass(IntSumReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

for (int i = 0; i < otherArgs.length - 1; ++i) {

FileInputFormat.addInputPath(job, new Path(otherArgs[i]));

}

FileOutputFormat.setOutputPath(job,

new Path(otherArgs[otherArgs.length - 1]));

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}8.配置WordCount

①將log4j.properties移動(dòng)到WordCount類下

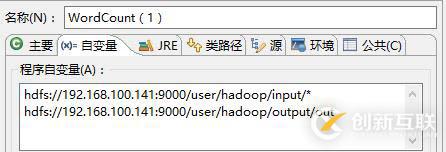

②設(shè)置WordCount的運(yùn)行自變量

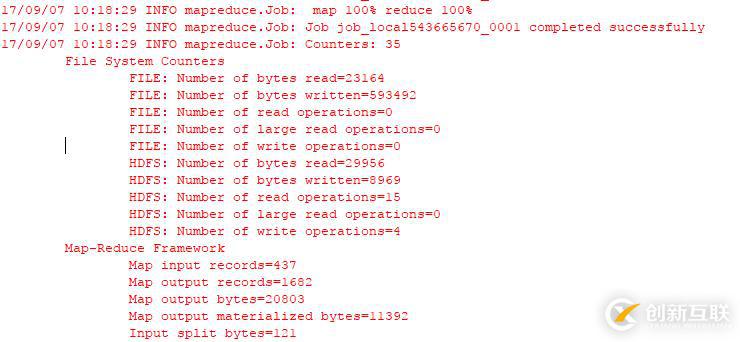

8.運(yùn)行測(cè)試

III. Exporting the JAR and Submitting It for Execution

1. Export WordCount

2. Upload the exported JAR to the Hadoop cluster

[hadoop@hadoop ~]$ ls wc.jar

3. Run the job

    [hadoop@hadoop ~]$ hadoop jar wc.jar WordCount /user/hadoop/input/* /user/hadoop/output/out
    17/09/06 22:36:56 INFO client.RMProxy: Connecting to ResourceManager at hadoop/192.168.100.141:8032
    17/09/06 22:36:57 INFO input.FileInputFormat: Total input paths to process : 1
    17/09/06 22:36:58 INFO mapreduce.JobSubmitter: number of splits:1
    17/09/06 22:36:58 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1504744740212_0001
    17/09/06 22:36:59 INFO impl.YarnClientImpl: Submitted application application_1504744740212_0001
    17/09/06 22:36:59 INFO mapreduce.Job: The url to track the job: http://hadoop:8088/proxy/application_1504744740212_0001/
    17/09/06 22:36:59 INFO mapreduce.Job: Running job: job_1504744740212_0001
    17/09/06 22:37:36 INFO mapreduce.Job: Job job_1504744740212_0001 running in uber mode : false
    17/09/06 22:37:36 INFO mapreduce.Job: map 0% reduce 0%
    17/09/06 22:38:26 INFO mapreduce.Job: map 100% reduce 0%
    17/09/06 22:38:42 INFO mapreduce.Job: map 100% reduce 100%
    17/09/06 22:38:46 INFO mapreduce.Job: Job job_1504744740212_0001 completed successfully

4. View the results

    [hadoop@hadoop ~]$ hdfs dfs -cat /user/hadoop/output/out/part-r-00000
    "AS 1
    "GCC 1
    "License"); 1
    & 1
    'Aalto 1
    'Apache 4
    'ArrayDeque', 1
    'Bouncy 1
    'Caliper', 1
    'Compress-LZF', 1
    ……
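Keys such as `"License");` and `'Apache` appear in the output because the mapper splits each line with `StringTokenizer`, which by default breaks on whitespace only and leaves punctuation attached to the word. The sketch below reproduces that counting logic locally with plain JDK collections, so the tokenization can be examined without a cluster. It is an illustration, not the job itself: the class name `LocalWordCount` and the sample string are hypothetical, and a `TreeMap` merely approximates the sorted key order of the job output.

```java
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Sketch: the map and reduce steps of WordCount collapsed into one local method.
class LocalWordCount {

    // Counts whitespace-separated tokens, returning them in sorted key order.
    static Map<String, Integer> count(String text) {
        Map<String, Integer> counts = new TreeMap<>();
        StringTokenizer itr = new StringTokenizer(text);
        while (itr.hasMoreTokens()) {
            // Equivalent to emitting (token, 1) and summing per key.
            counts.merge(itr.nextToken(), 1, Integer::sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        String sample = "the \"License\"); the 'Apache License";
        count(sample).forEach((word, n) -> System.out.println(word + "\t" + n));
        // Prints four lines: "License"); 1, 'Apache 1, License 1, the 2
    }
}
```

If cleaner keys are wanted, punctuation would have to be stripped in the mapper before `context.write` is called. The job shown in this article does not do that.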
